A landing page is never truly finished. Small changes in headlines, CTAs, layout, or forms can dramatically impact conversion rates. A/B testing for landing pages is the process of comparing two versions of a page to see which one performs better based on real user data.

Instead of relying on assumptions, A/B testing helps you make data-driven decisions. You test one variable at a time, measure conversions, and choose the version that delivers stronger results.

From an SEO standpoint, better engagement can indirectly support how well a page performs overall. For LLM-driven search systems, clear definitions, a structured methodology, and measurable outcomes can help signal expertise and trustworthiness.

In this guide, you’ll learn how A/B testing works, what to test, which metrics matter, and how to run tests correctly without wasting traffic.

How Landing Page A/B Testing Works

A/B testing works by splitting landing page traffic between two versions, the control (Version A) and a variation (Version B), to determine which version drives a higher conversion rate based on real user behavior.

The process follows five structured steps:

- Define a primary conversion metric (e.g., form submissions, purchases, demo bookings).

- Form a hypothesis tied to one variable (headline, CTA, layout, pricing display, etc.).

- Create a controlled variation where only that single element changes.

- Randomly split traffic between both versions (commonly 50/50).

- Analyze results once the planned sample size is reached, and check whether the difference is statistically significant.

The key principle is variable isolation. If multiple elements change at once, you lose clarity on what caused the performance shift.

A proper A/B test also requires:

- Adequate sample size

- Consistent traffic source

- Stable offer and pricing

- A defined confidence threshold (typically 95%)

For example, if Version A converts at 4% and Version B converts at 5%, that’s a 25% relative lift. But without sufficient traffic, that difference may not be statistically reliable. This is why traffic volume and test duration matter as much as the variation itself.
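To see why traffic volume matters, here is a minimal sketch of a two-proportion z-test using only Python’s standard library. It compares the same 4% vs 5% rates at two traffic levels. The visitor counts are hypothetical, and most testing tools run an equivalent (or more sophisticated) calculation for you.

```python
from math import sqrt
from statistics import NormalDist


def relative_lift(rate_a: float, rate_b: float) -> float:
    """Relative lift of B over A, e.g. 0.25 for 4% -> 5%."""
    return (rate_b - rate_a) / rate_a


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates (pooled z-test)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical traffic: the same 4% vs 5% rates at 1,000 and 10,000 visitors per version.
small = two_proportion_z_test(40, 1_000, 50, 1_000)
large = two_proportion_z_test(400, 10_000, 500, 10_000)
print(f"lift: {relative_lift(0.04, 0.05):.0%}")
print(f"p-value with 1,000 visitors each:  {small:.3f}")
print(f"p-value with 10,000 visitors each: {large:.4f}")
```

With 1,000 visitors per version, the p-value is roughly 0.28, far above the usual 0.05 cutoff. With ten times the traffic, the same rates produce a p-value well below 0.05.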

Over time, disciplined A/B testing compounds incremental gains, and a series of small 5–10% lifts can add up to substantial revenue growth without increasing traffic spend.

Why A/B Testing Matters for a Landing Page

A landing page has one job: convert. But even well-designed pages rarely perform at their full potential on the first version. A/B testing matters because it replaces assumptions with data, and small improvements in conversion rate can create a large revenue impact. In fact, research compiled by Invesp shows that structured A/B testing of landing pages can improve conversion rates by up to 30% when executed properly.

For example, increasing a landing page conversion rate from 3% to 4% is a 33% relative lift. That means more leads or sales from the same traffic, without increasing ad spend or SEO effort.

A/B testing also:

- Reduces risk when updating messaging, design, or pricing

- Improves ROI from paid and organic traffic

- Reveals audience preferences through real behavior, not opinions

- Can indirectly support SEO performance by improving engagement

Instead of redesigning pages blindly, A/B testing creates a controlled optimization process. Over time, consistent testing compounds results, turning incremental gains into measurable business growth.

Types of A/B Tests

Not all A/B tests are structured the same way. The format you choose depends on how much traffic your landing page receives, how large the proposed change is, and how precise you want your insights to be.

1. Classic A/B Test (A vs B)

This is the most common and statistically clean format. You compare two versions of the same landing page with one controlled change between them.

For example, you might test:

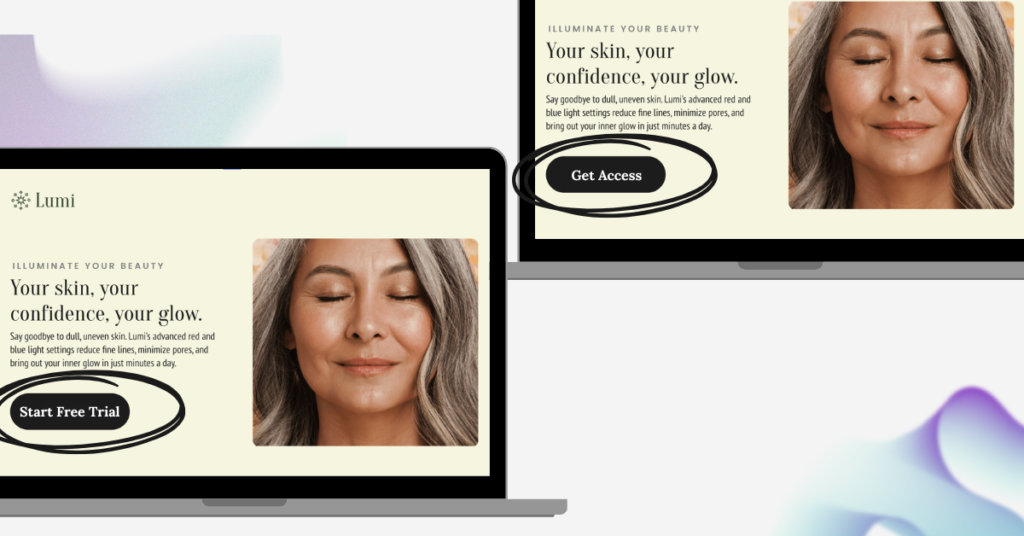

- A benefit-driven headline vs a problem-focused headline

- “Start Free Trial” vs “Get Instant Access”

- A short 3-field form vs a longer qualification form

Traffic is split between both versions, and you measure which produces a higher conversion rate.

Because only one element changes, this method gives you clear cause-and-effect insights. For most businesses, this is the most practical and reliable testing method.

2. A/B/n Testing (Multiple Variants)

Instead of testing just two versions, A/B/n testing compares three or more variations simultaneously.

For example, you could test three different value propositions:

- Cost savings

- Speed and convenience

- Risk reduction

Traffic is divided across all variants. While this allows you to explore creative direction faster, it requires significantly more traffic to reach statistical confidence.

This format is best suited for high-traffic landing pages where you want to identify a winning direction early.
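One way to put a number on “significantly more traffic” is a rough back-of-the-envelope model. This sketch assumes that (1) daily traffic is split evenly across all versions, (2) each variant is compared against the control with a Bonferroni-corrected significance level, and (3) the required sample per version scales with (z_α + z_β)², as in the standard normal-approximation formula. The function name and defaults are illustrative, not taken from any testing tool.

```python
from statistics import NormalDist


def relative_duration(versions: int, alpha: float = 0.05, power: float = 0.80) -> float:
    """Approximate run time of an A/B/n test relative to a plain A/B test.

    Holds daily traffic, baseline rate, and minimum detectable effect fixed.
    """
    z = NormalDist().inv_cdf
    comparisons = versions - 1             # each variant vs. the control
    adjusted_alpha = alpha / comparisons   # Bonferroni correction
    z_beta = z(power)
    # A stricter alpha raises the critical value, so each version needs more visitors.
    sample_factor = ((z(1 - adjusted_alpha / 2) + z_beta) / (z(1 - alpha / 2) + z_beta)) ** 2
    # Each version receives 1/versions of the traffic instead of 1/2.
    traffic_factor = versions / 2
    return sample_factor * traffic_factor


for versions in (2, 3, 4):
    print(versions, "versions:", round(relative_duration(versions), 2), "x as long")
```

Under these assumptions, a control plus three variants takes roughly 2.7 times as long as a simple A/B test. A different correction method would change the exact figures, but not the direction.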

3. Split URL Testing

Split URL testing is used when testing major structural or design changes rather than small optimizations.

For example:

- Long-form landing page vs short-form page

- Video-first hero section vs image-first hero

- Minimalist layout vs feature-heavy layout

Each version exists on a separate URL, and traffic is divided between them.

This type of testing carries more risk because larger changes can impact performance dramatically. However, it also offers the potential for bigger conversion lifts.

4. Multivariate Testing

Multivariate testing evaluates multiple elements at the same time to determine which combination performs best.

For example, you might test:

- Two headlines

- Two CTA buttons

- Two hero images

Instead of just two versions, you end up testing every combination: 2 × 2 × 2 = 8 versions (Headline A + CTA A + Image A, Headline A + CTA A + Image B, and so on).

While powerful, this approach requires high traffic and careful statistical analysis. For most landing pages, multivariate testing is impractical unless you already receive substantial visitor volume.
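The combinatorial growth is easy to see in code. This sketch enumerates every version of a full-factorial multivariate test for the example above (two headlines, two CTAs, two hero images) and shows how an even split dilutes traffic. The labels and the 10,000-visitor figure are placeholders.

```python
from itertools import product

headlines = ["Headline A", "Headline B"]
ctas = ["CTA A", "CTA B"]
hero_images = ["Image A", "Image B"]

# Full-factorial design: every headline x CTA x image combination is its own version.
combinations = list(product(headlines, ctas, hero_images))


def visitors_per_version(total_visitors: int, versions: int) -> int:
    """Visitors each version receives under an even split."""
    return total_visitors // versions


print(len(combinations), "versions")  # 2 x 2 x 2 = 8
print(visitors_per_version(10_000, len(combinations)), "visitors each")
```

A classic A/B test would give each version 5,000 of the same 10,000 visitors; here each combination gets only 1,250.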

Which Type Should You Use?

For most landing pages with moderate traffic, a classic A/B test provides the clearest insights with the least risk.

A/B/n and multivariate testing become more valuable as traffic volume increases and when exploring broader creative direction rather than single-element optimization.

The key is not choosing the most complex test; it’s choosing the one that gives you actionable, reliable data.

What to A/B Test on a Landing Page

Not every element on a landing page deserves equal attention. The most effective A/B tests focus on elements that directly influence a visitor’s decision to convert. Instead of testing randomly, prioritize areas that affect clarity, trust, friction, and motivation.

Here are the highest-impact components worth testing:

1. Headline and Value Proposition

Your headline is the first filter. If it doesn’t clearly communicate value, most visitors won’t scroll further.

You can test:

- Problem-focused vs outcome-driven headlines

- Short headline vs specific, detailed headline

- Generic messaging vs quantified results (“Increase Conversions” vs “Increase Conversions by 27%”)

Often, clarity beats creativity. Testing different angles of your value proposition can dramatically influence how quickly visitors understand your offer.

2. Call-to-Action (CTA)

Your CTA is the moment of commitment. Small changes in wording or design can shift conversion rates meaningfully.

Test:

- “Start Free Trial” vs “Get Instant Access”

- First-person vs second-person phrasing (“Start My Trial” vs “Start Your Trial”)

- High-contrast button vs subtle design

- Single CTA vs repeated CTA sections

The goal is reducing hesitation and reinforcing the benefit of clicking.

3. Form Structure and Friction

Forms are one of the biggest conversion bottlenecks.

You can test:

- Short form (name + email) vs longer qualification form

- Multi-step form vs single long form

- Optional fields vs required fields

Shorter forms usually increase submission volume, but longer forms may improve lead quality. That’s why it’s important to track not just conversions, but downstream performance.

4. Hero Section (Image, Video, or Layout)

The hero section sets the tone and emotional context of the page.

You might test:

- Product screenshot vs lifestyle imagery

- Static image vs explainer video

- Minimal layout vs information-rich header

Visual presentation affects trust and comprehension more than many marketers expect.

5. Social Proof and Trust Signals

Trust reduces resistance.

You can test:

- Testimonials above the fold vs below

- Video testimonials vs written quotes

- Adding client logos or certifications

- Displaying ratings or case study data

In competitive markets, social proof can significantly improve performance. In simpler offers, too much proof can create distraction. Testing reveals the balance.

6. Offer Framing and Pricing

If your landing page includes pricing or incentives, presentation matters.

Test:

- Monthly vs annual pricing emphasis

- Showing savings percentages

- Limited-time bonus vs standard offer

- Anchored pricing (“Was $99, Now $59”)

Sometimes the framing of value impacts conversions more than the price itself.

7. Page Length and Section Order

There is no universal rule for short vs long landing pages.

You can test:

- Long-form persuasive copy vs concise summary page

- Benefits before features vs features before benefits

- FAQ placement above vs below testimonials

Complex or expensive offers often require more explanation. Simple offers benefit from speed and clarity.

Strategic Priority

If you’re unsure where to begin, start with elements closest to the conversion decision:

- Headline

- CTA

- Offer clarity

- Form friction

Testing these areas typically produces the largest impact before moving into design refinements.

The key isn’t testing everything; it’s testing what meaningfully influences behavior.

How to Run an A/B Test

Running an A/B test is simple in theory, but precision matters. A poorly structured test can lead to misleading conclusions and wasted traffic.

Here’s a disciplined step-by-step process.

1. Define a Clear Goal

Start with one primary conversion metric. This could be:

- Form submissions

- Demo bookings

- Purchases

- CTA clicks

Avoid testing without a defined success metric. If you don’t know what “winning” means, the test has no direction.

2. Form a Hypothesis

Your hypothesis should connect a change to an expected outcome.

For example:

If we reduce the form from six fields to three, conversions will increase because friction decreases.

A strong hypothesis prevents random testing and keeps experimentation strategic.

3. Change Only One Variable

Create a variation where only one element differs from the original page.

This ensures you can confidently attribute performance changes to that specific modification. Changing multiple elements at once reduces clarity.

4. Split Traffic Randomly

Divide traffic between the original (control) and the variation.

In most cases, a 50/50 split is ideal. Ensure:

- Visitors are randomly assigned

- Returning users see the same version

- Traffic sources remain consistent

Consistency preserves data integrity.
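Most testing tools handle assignment for you, but the underlying idea is simple. This sketch assumes each visitor carries a stable identifier, for example one stored in a first-party cookie (`visitor_id` and the experiment name are hypothetical). Hashing the identifier together with the experiment name produces a pseudo-random but repeatable bucket, so a returning visitor always lands in the same version.

```python
import hashlib

BUCKETS = 10_000


def assign_variant(visitor_id: str, experiment: str, share_a: float = 0.5) -> str:
    """Deterministically assign a visitor to version A or B.

    The same visitor_id and experiment always produce the same answer,
    while different visitors are spread roughly evenly across buckets.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Map the first 8 bytes of the hash to a bucket in [0, BUCKETS); modulo bias is negligible.
    bucket = int.from_bytes(digest[:8], "big") % BUCKETS
    return "A" if bucket < share_a * BUCKETS else "B"


print(assign_variant("visitor-123", "headline-test"))
```

Including the experiment name in the hash key means the same visitor can fall into different buckets in different experiments, which keeps separate tests from lining up with each other.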

5. Run the Test Long Enough

Stopping a test too early is one of the most common mistakes.

Before judging the result, wait until you have:

- The sample size you calculated before launching

- Coverage across typical traffic cycles (weekdays/weekends)

Only then check whether the difference clears your confidence threshold (at least 95% in most marketing contexts).

Small early lifts often disappear once more data is collected.
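A sample size calculator does not have to be a black box. Below is a minimal sketch of the common normal-approximation formula for comparing two conversion rates, plus a helper that rounds the run time up to whole weeks so both weekday and weekend traffic are covered. The 80% statistical power default is a widespread convention, not a requirement. The baseline, target rate, and daily traffic in the example are hypothetical, and dedicated calculators may use slightly different formulas.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline: float, expected: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH version to detect baseline -> expected (two-sided test)."""
    z = NormalDist().inv_cdf
    z_alpha = z(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_beta = z(power)           # about 0.84 for 80% power
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    return ceil((z_alpha + z_beta) ** 2 * variance / (expected - baseline) ** 2)


def planned_duration_days(n_per_variant: int, daily_visitors: int, variants: int = 2) -> int:
    """Days needed to reach the planned sample, rounded up to full weeks."""
    days = ceil(n_per_variant * variants / daily_visitors)
    return ceil(days / 7) * 7


n = sample_size_per_variant(0.04, 0.05)  # detect a 4% -> 5% change
print(n, "visitors per version")
print(planned_duration_days(n, daily_visitors=1_000), "days")
```

For a 4% baseline and a 5% target, this works out to roughly 6,700 visitors per version. Detecting a smaller lift requires noticeably more traffic, because the required sample grows with the inverse square of the difference.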

6. Analyze and Implement

Compare the primary metric between the two versions.

If the variation shows a statistically significant lift, it becomes the new control. If not, retain the original and test a different variable.

Optimization is iterative. One test rarely transforms performance; consistent testing does.

A/B testing isn’t about guessing better. It’s about building a repeatable system for improving conversion rates through controlled experimentation.

A/B Testing Metrics for Landing Pages

The success of an A/B test depends on measuring the right metric. Choosing the wrong metric can lead to misleading wins that don’t actually improve business outcomes.

1. Conversion Rate (Primary Metric)

This is the most common metric in landing page testing.

Conversion Rate = (Conversions ÷ Total Visitors) × 100

For example, if 50 out of 1,000 visitors convert, your conversion rate is 5%.

This metric tells you how effectively your page turns visitors into leads or customers. For most landing pages, this should be the primary measurement.

2. Click-Through Rate (CTR)

CTR measures how many users click a specific button or link.

CTR = (Clicks ÷ Visitors) × 100

This is useful when testing:

- CTA button copy

- Placement changes

- Navigation adjustments

However, a higher CTR doesn’t always mean better final conversions. It should support — not replace — conversion rate tracking.

3. Revenue Per Visitor (RPV)

For e-commerce or paid campaigns, revenue per visitor provides deeper insight.

RPV = Total Revenue ÷ Total Visitors

A variation may lower conversion rate but increase average order value, resulting in higher overall revenue. That’s why revenue-based metrics matter for sales-driven pages.
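The three formulas above can be computed side by side for each version. In this sketch, the visitor, click, conversion, and revenue figures are hypothetical. They were chosen to illustrate the scenario just described: Version B converts less often but earns more per visitor.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    visitors: int
    clicks: int       # clicks on the tracked CTA
    conversions: int
    revenue: float

    def conversion_rate(self) -> float:
        """Conversion Rate = (Conversions / Total Visitors) x 100"""
        return 100 * self.conversions / self.visitors

    def click_through_rate(self) -> float:
        """CTR = (Clicks / Visitors) x 100"""
        return 100 * self.clicks / self.visitors

    def revenue_per_visitor(self) -> float:
        """RPV = Total Revenue / Total Visitors"""
        return self.revenue / self.visitors


# Hypothetical results: B has fewer conversions but a higher average order value.
version_a = VariantStats(visitors=1_000, clicks=120, conversions=50, revenue=2_500.0)
version_b = VariantStats(visitors=1_000, clicks=110, conversions=45, revenue=2_925.0)

for name, v in (("A", version_a), ("B", version_b)):
    print(f"{name}: CR {v.conversion_rate():.1f}%  CTR {v.click_through_rate():.1f}%  "
          f"RPV ${v.revenue_per_visitor():.2f}")
```

If the page’s goal is revenue, B is the better version despite its lower conversion rate and CTR.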

4. Form Completion Rate

This measures how many users who start filling out a form actually complete it.

It’s useful when testing:

- Form length

- Multi-step vs single-step forms

- Field order

High drop-off often signals friction or confusion.
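Form completion rate follows the same pattern: completions divided by the users who started the form. For multi-step forms, the drop-off between consecutive steps shows where friction concentrates. Here is a minimal sketch, assuming your analytics records how many users reach each step (the counts are hypothetical):

```python
def form_completion_rate(starts: int, completions: int) -> float:
    """Share of users who started the form and finished it, as a percentage."""
    return 100 * completions / starts


def step_dropoff(step_counts: list[int]) -> list[float]:
    """Percentage of users lost between each pair of consecutive form steps."""
    return [100 * (prev - curr) / prev for prev, curr in zip(step_counts, step_counts[1:])]


# Hypothetical multi-step form: 400 starts, 300 reach step 2, 240 submit.
print(form_completion_rate(starts=400, completions=240))  # 60.0
print(step_dropoff([400, 300, 240]))                       # [25.0, 20.0]
```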

5. Engagement Metrics (Supporting Signals)

These include:

- Bounce rate

- Time on page

- Scroll depth

While not direct conversion indicators, they help explain user behavior and validate test outcomes.

For example, a version with higher time on page but lower conversions may indicate confusion rather than engagement.

Choosing the Right Metric for Landing Page A/B Testing

Every A/B test should have:

- One primary metric (e.g., conversion rate)

- One or two supporting metrics (e.g., revenue or engagement signals)

Avoid optimizing for vanity metrics like clicks if they don’t align with the final business goal.

The best A/B tests measure impact, not activity.

How to Analyze Results

Collecting data is easy. Interpreting it correctly is what determines whether your A/B test actually improves performance.

Here’s how to analyze results properly.

1. Start With the Primary Metric

Look at the conversion metric you defined before launching the test.

Ask:

- Did Version B outperform Version A?

- By how much?

- Is the lift meaningful or marginal?

For example:

If Version A converts at 4% and Version B converts at 4.3%, that’s a 7.5% relative lift. The question is whether that difference is statistically reliable and business-relevant.

Small percentage lifts can be powerful at scale, but only if they’re real.
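A confidence interval for the difference answers both questions at once: whether the lift is reliable and how large it could plausibly be. This sketch uses the simple normal-approximation (Wald) interval. The visitor counts for the 4% vs 4.3% example are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def diff_confidence_interval(conv_a: int, n_a: int, conv_b: int, n_b: int,
                             confidence: float = 0.95) -> tuple[float, float]:
    """Approximate confidence interval for (rate_b - rate_a), in absolute terms."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    se = sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = rate_b - rate_a
    return diff - z * se, diff + z * se


# 4% vs 4.3% (a 7.5% relative lift) at two hypothetical traffic levels.
for conv_a, conv_b, visitors in ((800, 860, 20_000), (4_000, 4_300, 100_000)):
    low, high = diff_confidence_interval(conv_a, visitors, conv_b, visitors)
    print(f"{visitors:>7,} per version: {low:+.2%} to {high:+.2%}")
```

At 20,000 visitors per version, the interval still includes zero, so the 7.5% lift is not yet reliable. At 100,000 visitors per version, it excludes zero, which is roughly equivalent to a significant result at the 95% level.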

2. Check Statistical Significance

A lift means nothing without statistical confidence.

In most marketing experiments, a 95% confidence level is used as the benchmark. This means that if there were truly no difference between the versions, a gap at least as large as the one observed would appear by chance less than 5% of the time.

Without sufficient sample size and confidence, early “wins” are often false positives.

Never declare a winner based on small data samples.

3. Compare Absolute Impact, Not Just Percentage Lift

Percentage lift sounds impressive, but always calculate the actual business effect.

Example:

- A 0.5-percentage-point lift on 1,000 visitors = 5 additional conversions

- A 0.5-percentage-point lift on 100,000 visitors = 500 additional conversions

Context determines importance.

4. Review Supporting Metrics

Before declaring a winner, check related signals:

- Did bounce rate increase?

- Did revenue per visitor decrease?

- Did lead quality change?

A version might increase form submissions but reduce downstream sales. That’s not a true win.

5. Consider Traffic Consistency

Ensure:

- Traffic sources were evenly distributed

- No major campaign changes occurred mid-test

- Seasonality or weekday effects didn’t skew results

External factors can distort performance.

6. Decide: Implement, Extend, or Discard

After reviewing data:

- Clear winner with significance → Implement

- Inconclusive results → Extend test

- Clear loser → Discard and test a new variable
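These rules can be written down before the test starts, so the decision is not made on impulse. The following is a minimal sketch, assuming you already have a confidence interval for the difference in the primary metric (variation minus control) and a simple yes/no check on supporting metrics. The function and its labels are hypothetical, not a standard API.

```python
def decide(ci_low: float, ci_high: float, reached_planned_sample: bool,
           guardrails_ok: bool = True) -> str:
    """Map a finished test to implement / extend / discard.

    ci_low, ci_high: confidence interval for (variation - control) on the primary metric.
    guardrails_ok: False if supporting metrics (revenue, lead quality, etc.) got worse.
    """
    if not reached_planned_sample:
        return "keep running"  # too early to judge either way
    if ci_low > 0:
        # Significant lift, but only a true win if supporting metrics held up.
        return "implement" if guardrails_ok else "discard"
    if ci_high < 0:
        return "discard"       # clear loser: significantly worse than the control
    return "extend"            # interval includes zero: inconclusive
```

Extending works best when you commit to a new, larger sample size in advance, rather than re-checking every day until a winner appears.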

Optimization is iterative. Every test generates insight, even when results are neutral.

Final Principle

A/B testing isn’t about chasing percentage lifts. It’s about making statistically sound decisions that improve real business outcomes.

The goal is not just more conversions; it’s smarter optimization.

Common A/B Testing Mistakes on Landing Pages

A/B testing seems simple, but most failed experiments don’t fail because of bad ideas; they fail because of poor execution. Small analytical mistakes can invalidate results, waste traffic, and lead to incorrect optimization decisions.

Here are the most common errors marketers make when running landing page A/B tests.

Stopping the Test Too Early

This is the most frequent and most damaging mistake.

Early results often show large lifts because of small sample sizes. A version may appear to be “winning” after a few hundred visitors, but as more traffic flows in, the difference shrinks or reverses entirely.

Declaring a winner without sufficient sample size and statistical confidence leads to false positives. In most marketing scenarios, you should first reach a sample size planned in advance, with enough conversions per variant, and only then check for at least 95% statistical confidence. Impatience distorts data.

Testing Multiple Changes in One Variation

When you change the headline, CTA, layout, and form at the same time, you may see a performance shift, but you won’t know why.

Was it the headline?

The CTA?

The shorter form?

Without isolating a single variable, you lose the ability to draw reliable conclusions. Structured experimentation requires discipline. One variable per test keeps the cause and effect clear.

Optimizing for the Wrong Metric

Not all improvements are real improvements.

For example, a new CTA might increase click-through rate but reduce completed purchases. A shorter form might increase submissions but lower lead quality.

If your primary goal is revenue, optimize for revenue per visitor, not clicks. If your goal is qualified leads, measure downstream performance, not just form fills.

The metric you choose determines the decisions you make.

Running Tests on Insufficient Traffic

Low traffic does not mean you cannot test, but it does mean you must extend the testing period.

Running a test on a page with minimal visitors and calling a result after a few days creates unreliable conclusions. Statistical reliability depends on volume. Without adequate data, performance differences may simply reflect randomness.

Changing Conditions During the Test

If you modify ad targeting, adjust pricing, introduce promotions, or shift traffic sources mid-test, you contaminate the experiment.

A/B testing requires stable external conditions. If Version A and Version B are not exposed to similar audiences under similar circumstances, comparisons lose validity.

A controlled environment produces trustworthy results.

Ignoring Business Context

A statistically significant lift does not automatically mean meaningful impact.

For example, a 3% relative lift on a page with low traffic may generate minimal revenue impact. Conversely, a small lift on a high-traffic page can drive substantial growth.

Always evaluate results in terms of real business effect, not just percentages.

Peeking at Results Too Frequently

Constantly checking results and reacting emotionally to small fluctuations introduces bias.

Performance naturally fluctuates during a test. Looking too often can tempt premature decisions. Set a testing duration or conversion threshold before launching, and evaluate only once that threshold is reached.
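A small simulation shows why peeking is risky. It runs hypothetical A/A tests in which both versions share the same true 5% conversion rate, so any “significant” result is a false positive by construction. Running a standard z-test after every batch of visitors and stopping at the first significant result produces far more false winners than evaluating once at the planned end. The batch size, number of looks, and random seed are arbitrary assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist

Z_CRITICAL = NormalDist().inv_cdf(0.975)  # two-sided test at 95% confidence


def is_significant(conv_a: int, conv_b: int, n: int) -> bool:
    """Pooled two-proportion z-test with n visitors per version."""
    pooled = (conv_a + conv_b) / (2 * n)
    se = sqrt(pooled * (1 - pooled) * 2 / n)
    if se == 0:
        return False
    return abs(conv_b - conv_a) / n / se > Z_CRITICAL


def simulate_aa_tests(rate=0.05, batch=200, looks=20, runs=400, seed=7):
    """Return (false-positive rate with peeking, false-positive rate with one final look)."""
    rng = random.Random(seed)
    peeking_hits = final_hits = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        flagged_at_any_look = False
        for _ in range(looks):
            n += batch
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            flagged_at_any_look = flagged_at_any_look or is_significant(conv_a, conv_b, n)
        peeking_hits += flagged_at_any_look
        final_hits += is_significant(conv_a, conv_b, n)
    return peeking_hits / runs, final_hits / runs


peeking_rate, final_rate = simulate_aa_tests()
print(f"false winners when peeking after every batch: {peeking_rate:.0%}")
print(f"false winners with a single final check:      {final_rate:.0%}")
```

The exact percentages depend on these assumptions, but the pattern holds: the more often you check and act, the more likely you are to declare random noise a winner.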

Testing Without a Hypothesis

Random experimentation leads to random insights.

Each A/B test should begin with a reasoned hypothesis. Instead of “let’s try a different button color,” define the logic behind the change. A structured hypothesis makes the outcome interpretable and repeatable.

Treating A/B Testing as a One-Time Activity

A single winning test does not create optimization maturity.

High-performing landing pages are built through sequential experimentation. Each winning variation becomes the new control, and testing continues. Optimization compounds over time.
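Sequential wins compound multiplicatively because each lift applies to the already-improved rate. Here is a quick sketch, assuming each lift holds up after launch and the lifts are independent of one another (in practice, gains often shrink somewhat). The lift values are hypothetical.

```python
from math import prod


def compound_lift(lifts: list[float]) -> float:
    """Total relative lift after applying each winning test in sequence."""
    return prod(1 + lift for lift in lifts) - 1


# Three hypothetical winning tests: +5%, +8%, and +10%.
print(f"{compound_lift([0.05, 0.08, 0.10]):.1%}")
```

Three modest wins add up to roughly a 25% improvement, slightly more than the 23% you would get by simply summing them.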

The difference between average and high-performing landing pages is not creativity; it is disciplined experimentation.

Avoiding these mistakes ensures your A/B testing produces reliable insights rather than misleading data.

A/B Testing Tools for Landing Page Optimization

Running effective A/B tests requires more than just splitting traffic. The right tool ensures accurate randomization, reliable statistical reporting, and clean data interpretation. Choosing incorrectly can lead to misleading results, even if your hypothesis is strong.

Here’s how to think about A/B testing tools based on maturity level and use case.

Landing Page Builders with Native A/B Testing

Tools like Unbounce and Leadpages combine page building and testing inside one platform. You create variations directly in the editor and allocate traffic between them.

These are ideal when:

- You primarily test landing pages

- You don’t have developer support

- You want quick deployment

Strength: Simplicity and speed.

Limitation: Less flexibility for advanced experimentation across full websites.

Analytics-Integrated Testing Tools

Tools that integrate deeply with analytics platforms (such as testing tools connected to Google Analytics 4) allow you to use existing conversion goals and revenue tracking.

These are useful when:

- You already track detailed goals in analytics

- You need cross-session attribution

- You want deeper behavioral analysis

Strength: Better data continuity.

Limitation: Requires accurate analytics setup to avoid distorted results.

Enterprise Experimentation Platforms

Platforms like Optimizely, VWO, and Convert offer advanced experimentation, including server-side testing, segmentation, personalization, and multi-page tests.

These tools are appropriate when:

- You have high traffic

- You run experiments across product flows, not just landing pages

- You need segmentation by device, behavior, or audience cohort

Strength: Precision and scale.

Limitation: Higher cost and technical complexity.

Client-Side vs Server-Side Testing

This distinction matters for serious optimization.

Client-side testing modifies the page after it loads in the browser. It’s easier to implement but can cause a brief flicker, where visitors momentarily see the original content before the variation appears.

Server-side testing delivers the variation before the page renders. It’s more technically complex but cleaner and more reliable, especially for high-scale experiments.

For most landing page optimization, client-side testing is sufficient. For product or pricing experiments at scale, server-side is more robust.

What to Look for in an A/B Testing Tool

Regardless of platform, ensure the tool provides:

- True random traffic allocation

- Statistical confidence reporting

- Returning visitor consistency

- Goal tracking tied to business metrics

- Segmentation by device and traffic source

Avoid tools that show percentage lifts without explaining confidence levels or sample size requirements.

Choosing the Right Tool

- If you test landing pages occasionally → use a builder with native testing

- If you optimize marketing funnels → use analytics-integrated tools

- If you run structured experimentation programs → use enterprise platforms

The best tool is not the most advanced; it’s the one that matches your traffic volume, technical capability, and testing maturity.

Conclusion

A/B testing isn’t about chasing quick wins; it’s about building a disciplined optimization system. Small, statistically sound improvements compound over time and turn average landing pages into high-performing assets.

When you define clear goals, test one variable at a time, measure the right metrics, and analyze results properly, optimization becomes predictable, not guesswork.

The advantage doesn’t go to the most creative marketer. It goes to the most consistent experimenter.

Frequently Asked Questions

How long should you run an A/B test?

An A/B test should run until it reaches the sample size you calculated before launch, with enough conversions per variation to ensure reliability. Only then should you check for statistical significance, typically at 95% confidence. The required duration depends on your traffic volume. Low-traffic pages may need several weeks to produce dependable results.

What is a good sample size for A/B testing?

There is no universal number, but each variation should receive enough visitors to produce stable data. Sample size calculators can estimate the required traffic based on your current conversion rate and expected lift. Testing with very small datasets increases the risk of false positives.

Can you test more than one element at a time?

Yes, but it changes the test type. Testing multiple elements simultaneously becomes a multivariate test, which requires higher traffic and more complex analysis. For most landing pages, testing one variable at a time provides clearer insights.

What confidence level should you use in A/B testing?

Most marketing experiments use a 95% confidence level as the benchmark. This means that if there were no real difference between the versions, a result at least as extreme as the one observed would occur less than 5% of the time. Higher confidence increases reliability but may require longer test durations.

Does A/B testing affect SEO?

A/B testing does not harm SEO when implemented correctly. For split URL tests, add a rel="canonical" link on the variation URL that points to the original page, and use temporary (302) redirects rather than permanent (301) ones. Avoid cloaking, which means serving search engine bots different content than users see. When done properly, A/B testing can improve engagement and conversion performance without SEO risk.

What is the difference between A/B testing and multivariate testing?

A/B testing compares two versions of a page with one change between them. Multivariate testing evaluates multiple elements and combinations simultaneously. A/B testing is simpler and more practical for most landing pages.

How many A/B tests should you run at once?

Avoid running overlapping tests on the same traffic unless they are carefully segmented. Multiple simultaneous tests can interfere with each other and distort results. Sequential testing is typically more reliable.